You’ve likely come across the terms “content filtering” and “content moderation” and seen them used almost interchangeably. While they are related, they are not the same thing.

Let’s dive into what sets them apart and how they complement each other, allowing platforms that rely on user-generated content to scale to new heights.

The difference between content filtering and moderation

So what are the actual differences between content filtering and content moderation?

- Content filtering is your platform’s automated gatekeeper. It scans and filters content based on predefined criteria such as keywords, source validation, image content, and other rules you have set up. Whether it’s blocking spam or explicit material, content filtering quickly catches unsavory or dangerous content before it reaches the end user. Usually, several different filters work in tandem.

- Content moderation is a broader process encompassing filtering, reviewing, and managing content to ensure it conforms to your platform’s policies. This can be done by human eyes, automated systems, or a mix of both, providing a more nuanced review of content and its context.

Content filtering is, in other words, a subset of the content moderation process.

For example, here at Besedo, we provide multiple automated systems for various forms of content filtering for our clients. This can then be complemented by additional content moderation done in-house by a client’s team or outsourced to us.

AI-assisted content moderation and filtering are becoming increasingly common, and we have worked with them for years. AI makes it possible to automate a growing share of the process, which becomes important at scale.

How they interact for scalability

Content moderation at scale would be impossible without efficient content filtering. Without the automation that filters provide, the more resource-intensive and time-consuming parts of the moderation process would quickly become untenable.

Content filtering takes care of the clear-cut cases, handling them quickly and efficiently, while moderation handles the more nuanced or context-dependent scenarios. Together, they form a robust line of defense, ensuring a safe and pleasant experience for your end users.

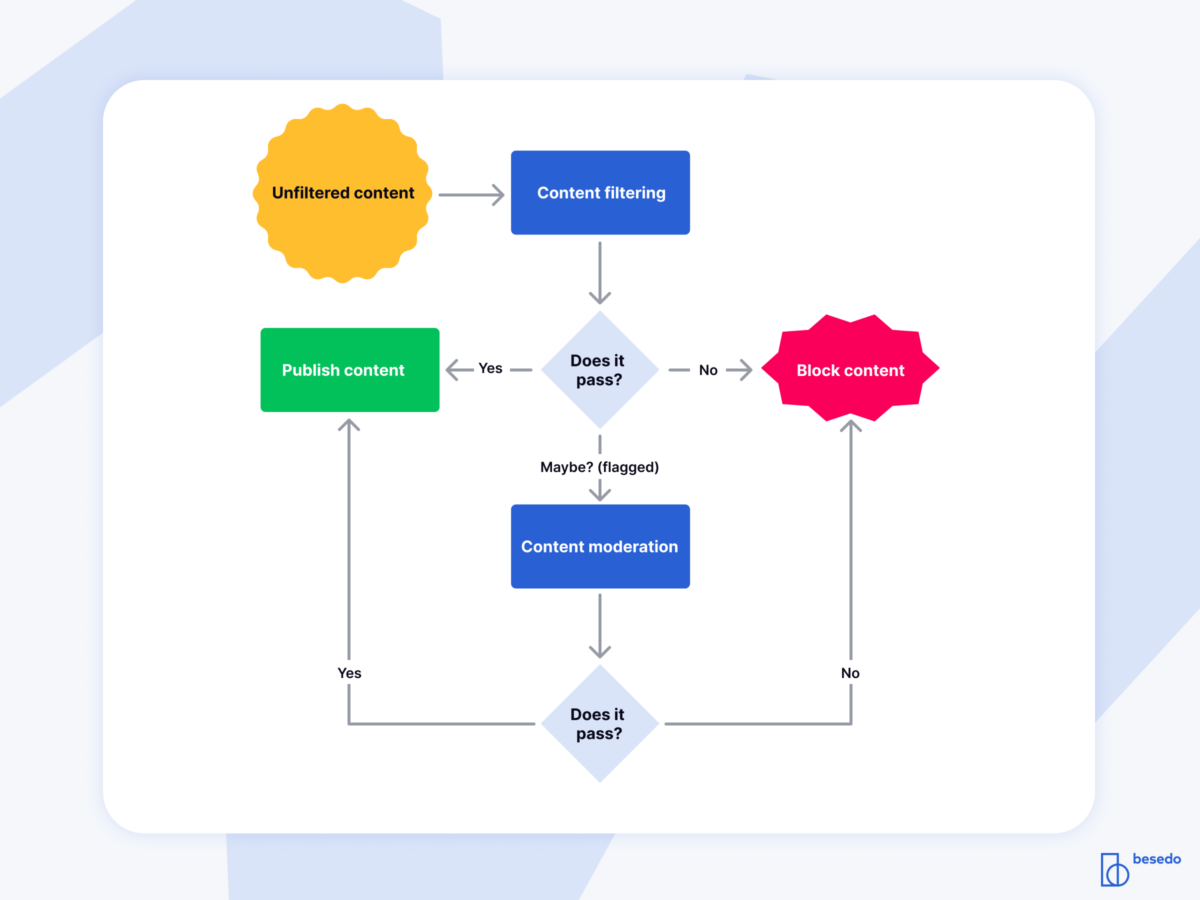

Below is a simplified overview of how the content moderation process goes from “input to output” for user-generated content.

Content should be flagged and escalated to a manual process only when there is uncertainty about what decision to make. That manual process involves a human, usually a professional content moderator. For example, here at Besedo, content moderators have a dedicated user interface where they can quickly review any flagged content and take appropriate action.
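The escalation rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any real product: the `triage` function, the `Decision` names, and both threshold values are hypothetical, and the risk score is assumed to come from some upstream filter or model.

```python
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ESCALATE = "escalate"  # hand over to a human moderator


# Hypothetical thresholds; a real platform would tune these to its own policies.
APPROVE_BELOW = 0.2
REJECT_ABOVE = 0.9


def triage(risk_score: float) -> Decision:
    """Route content based on an assumed risk score between 0.0 and 1.0."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0.0 and 1.0")
    if risk_score < APPROVE_BELOW:
        return Decision.APPROVE   # clear-cut safe: publish automatically
    if risk_score > REJECT_ABOVE:
        return Decision.REJECT    # clear-cut violation: block automatically
    return Decision.ESCALATE      # uncertain: flag for manual review


if __name__ == "__main__":
    for score in (0.05, 0.5, 0.97):
        print(score, triage(score).value)
```

Only the middle band reaches a human, so widening or narrowing the gap between the two thresholds is one way to trade moderator workload against the risk of automated mistakes.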

Content filtering examples

There is a wide variety of content filters, and the more you look, the more you find. In the realm of content moderation, these are some of the most common types:

- Profanity filters

- Nudity detection

- Spam filters

Any moderation system worth its salt has a whole battery of different content filters. They are often highly customizable and can be adapted to the specific needs of the platform they are running on.

They are perfect for large-scale automated filtering. Add some clever use of AI and even more advanced decisions can be automated. And automation = speed, which is great for the user experience.
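To make the idea of a customizable battery of filters more concrete, here is a rough Python sketch. Everything in it is an assumption made for illustration: the function names, the blocklist, and the link limit are hypothetical, and the rules are deliberately naive. Nudity detection is left out because it would need an image model rather than simple text rules.

```python
import re
from typing import Callable, Iterable, List, Optional

# A filter takes text and returns a reason string if it objects, else None.
Filter = Callable[[str], Optional[str]]


def make_profanity_filter(blocked_words: Iterable[str]) -> Filter:
    """Build a filter that flags any word found in a platform-specific blocklist."""
    blocked = frozenset(word.lower() for word in blocked_words)

    def check(text: str) -> Optional[str]:
        words = set(re.findall(r"[a-z']+", text.lower()))
        return "profanity" if words & blocked else None

    return check


def make_spam_filter(max_links: int) -> Filter:
    """Build a filter that flags text containing more than max_links URLs."""

    def check(text: str) -> Optional[str]:
        links = re.findall(r"https?://\S+", text)
        return "spam" if len(links) > max_links else None

    return check


def run_filters(text: str, filters: List[Filter]) -> List[str]:
    """Run every filter in order and collect the reasons from those that object."""
    reasons = []
    for content_filter in filters:
        reason = content_filter(text)
        if reason is not None:
            reasons.append(reason)
    return reasons


if __name__ == "__main__":
    # Each platform configures its own battery of filters.
    filters = [make_profanity_filter({"darn"}), make_spam_filter(max_links=1)]
    print(run_filters("Darn, visit http://a.example and http://b.example", filters))
    print(run_filters("Hello there", filters))
```

Because each filter is just a function built from its own settings, a platform can add, remove, or reconfigure filters without touching the code that runs them.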

What you want: Efficient content filtering and smart content moderation

Content moderation can be a tricky challenge. We know. We have been in the industry for over 20 years now, advising and working with lots of companies to help them filter and moderate content at scale.

Are you running an online service, marketplace, or any platform overflowing with user-generated content? You probably have some form of content moderation system in place, but if we may be so bold, our content moderation tools and services might be able to greatly simplify your life.

Please feel free to get in touch if you’d like to learn more.
