Most Recent Articles

Online Gaming Safety: 9 in 10 Gamers Wouldn’t Let Their Kid Play

We surveyed 2,000 gamers from all over the USA about their experiences in online multiplayer games. The results were eye-opening.

Can Dating Apps Keep Women Safe?

Protect women at all costs. Online dating must be safe. Learn how AI and moderation can help create a better experience this International Women’s Day.

Report: How Dating App Chats Are Driving Users Away

Dating app users are frustrated with in-app messaging. Our survey reveals why people are ghosting not just matches, but the apps themselves.

Data on Reddit’s massive amounts of user-generated content and how it is moderated

How does Reddit moderate billions of posts? Discover its AI-powered AutoModerator, 60K+ community mods, and scalable content moderation strategy.

Besedo Recruits Chief Executive Louise Barnekow

Besedo is delighted to announce the appointment of Louise Barnekow from Mynewsdesk as the company’s new CEO.

Words Matter: Why ‘Pig Butchering’ Is the Wrong Term for Online Scams

Language shapes reality—and your scam terminology might be helping fraudsters. Learn why Interpol is pushing for change, and what you need to do about it.

The Black Friday Online Shopping Boom Means a Big Rise in Stolen Packages

Package theft surges during Black Friday season, with 58 million Americans affected. Learn the startling statistics and how retailers are fighting back against porch pirates.

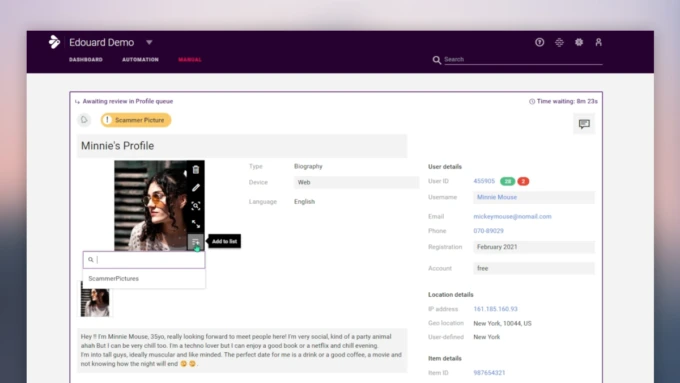

Outsmart Scammers with Implio’s Smart Lists

Simplify image moderation with Implio’s Smart Lists. Quickly flag and block altered images to outsmart scammers and protect your platform.

Build vs. Buy: Should You Outsource Content Moderation?

Learn if outsourcing content moderation is right for you in our free ebook. Real-world data and strategies for a safer, cost-effective platform await.