To reach high accuracy and precision, your content moderation team needs tools that give them enough insight to make the right decisions.

“It is a capital mistake to theorize before one has data. Insensibly one begins to twist facts to suit theories, instead of theories to suit facts.”

— Sir Arthur Conan Doyle, through Sherlock Holmes

With that in mind, we are developing Implio to present as much relevant data as possible to moderators when they face a moderation decision. The keyword here is relevant. We want to ensure that key information isn’t drowned out by filler data, but also that moderators have easy access to earlier conclusions and historical data about the person or item they are reviewing.

Our most recent step on the road to full representation is Implio’s newest feature: moderation notes.

With moderation notes, moderators can share insights about end users and the content items they post. These insights then automatically appear next to items under review whenever they are relevant.

Moderation notes are particularly powerful for fighting fraud. When a moderator rejects an item as fraudulent, they can leave a note stating exactly that and add any supporting information. The next time an item comes in from the same user, IP address or email address, the moderator reviewing it will see that note and know to be extra diligent.

How to leave notes

The more your team uses notes, the more powerful they become.

Once you start using the feature, ask your team to leave notes with insights that contributed to their moderation decision or that may be useful in the future.

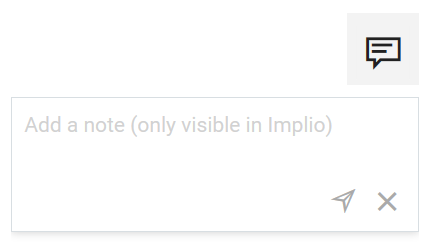

Notes can be left by clicking the note icon located in the top-right corner of an item.

Clicking that icon reveals a text field where you can leave a note of up to 2,000 characters.

You can create as many notes as you need to, but notes cannot be edited or deleted. This is to ensure that important data isn’t accidentally removed.
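The two rules above (a 2,000-character limit, and notes that can be added but never edited or deleted) amount to an append-only log. The following is a minimal sketch of that idea, not Implio's implementation: `Note`, `NoteLog` and `MAX_NOTE_LENGTH` are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone

MAX_NOTE_LENGTH = 2000  # character limit stated in the article


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Note:
    author: str
    text: str
    created_at: datetime


class NoteLog:
    """Append-only collection: notes can be added and read, nothing else."""

    def __init__(self) -> None:
        self._notes: list[Note] = []

    def add(self, author: str, text: str) -> Note:
        if len(text) > MAX_NOTE_LENGTH:
            raise ValueError(f"a note is limited to {MAX_NOTE_LENGTH} characters")
        note = Note(author, text, datetime.now(timezone.utc))
        self._notes.append(note)
        return note

    def all(self) -> tuple[Note, ...]:
        # Return a tuple so callers cannot mutate the underlying list.
        return tuple(self._notes)
```

The class deliberately has no `edit` or `delete` method, which mirrors the reasoning in the text: data that cannot be removed cannot be removed by accident.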

How do moderation notes work?

For every item that enters a moderation queue, Implio looks for relevant notes and displays them alongside the item.

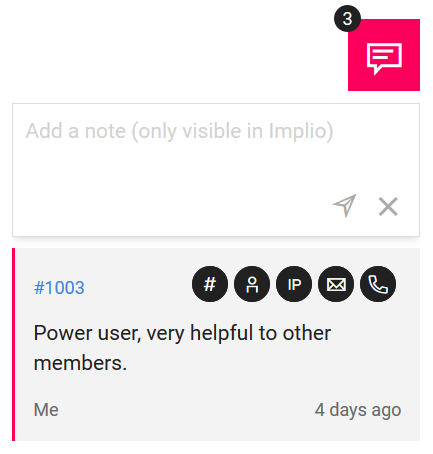

This happens for any note left on an item sharing one or more of the following attributes with the item currently being reviewed:

- same item ID

- same user ID

- same IP address

- same email address

- same phone number

Attributes in common between the note and the item being reviewed are symbolized by icons displayed above the note itself.

If an icon is greyed out, it means there is no relation between that specific data point and the item being reviewed.

For instance, if the name and email are different but the IP address and phone number are the same, you will see the former greyed out while the latter are highlighted.
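The matching rule and the icon states described above can be sketched in a few lines. This is an illustration under assumptions, not Implio's actual code: the `Attributes` class, its field names and both functions are hypothetical, and treating a missing value as "never a match" is an assumption the article does not state.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# The five attributes the article lists as grounds for showing a note.
MATCH_ATTRIBUTES = ("item_id", "user_id", "ip_address", "email", "phone")


@dataclass(frozen=True)
class Attributes:
    item_id: Optional[str] = None
    user_id: Optional[str] = None
    ip_address: Optional[str] = None
    email: Optional[str] = None
    phone: Optional[str] = None


def shared_attributes(noted: Attributes, current: Attributes) -> dict[str, bool]:
    """Map each attribute to True (icon highlighted) or False (icon greyed out)."""
    result = {}
    for name in MATCH_ATTRIBUTES:
        a, b = getattr(noted, name), getattr(current, name)
        # Assumption: a missing value never counts as a match.
        result[name] = a is not None and a == b
    return result


def is_relevant(noted: Attributes, current: Attributes) -> bool:
    """A note is shown if at least one attribute is shared."""
    return any(shared_attributes(noted, current).values())


noted_item = Attributes("A1", "u1", "203.0.113.7", "a@example.com", "555-0100")
new_item = Attributes("B2", "u2", "203.0.113.7", "b@example.com", "555-0100")
print(shared_attributes(noted_item, new_item))
# {'item_id': False, 'user_id': False, 'ip_address': True, 'email': False, 'phone': True}
```

Here the IP address and phone number match, so the note is relevant and those two icons would be highlighted while the other three stay greyed out.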

The moderator who left the note and the date at which it was left are indicated below the note.

Keep in mind that moderation notes are additional information meant to help moderators make the right decision. On their own, they are not enough to give a full picture of a user and their actions. They are, however, an important piece of the puzzle in grey-area cases and a powerful complement to existing insights.

There’s more to come

This is the first version of the moderation notes feature, and we have big plans for making it an even better tool in our ongoing effort to improve efficiency and accuracy.

“A feature like moderation notes might sound simple, but used collaboratively in moderation teams, it can be incredibly powerful.

We’ve designed the feature around the needs of our customers, with a strong focus on ease of use. But we’ve also looked ahead, ensuring that notes can be leveraged by other parts of Implio to make it even more useful.

The next step is to have automation rules make use of moderation notes, for instance by automatically sending new content for manual review if the user has received a note with a specific keyword like ‘fraud’ in the past.”

– Maxence Bernard, Chief R&D Officer at Besedo
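To make the planned rule concrete, here is a rough sketch of what such a check could look like. It is purely hypothetical: the quote describes a future direction, not a shipped feature, and the function name, its parameters and the case-insensitive substring matching are all assumptions made for illustration.

```python
from typing import Iterable


def needs_manual_review(past_notes: Iterable[str], keyword: str = "fraud") -> bool:
    """Return True if any earlier note about the user mentions the keyword."""
    needle = keyword.casefold()  # assumption: matching ignores letter case
    return any(needle in note.casefold() for note in past_notes)


notes_on_user = ["Rejected as FRAUD, stolen photos", "Price looks unrealistic"]
if needs_manual_review(notes_on_user):
    print("send to manual review")
```

Plain substring matching keeps the sketch short; a real rule would probably need word boundaries or a curated keyword list to avoid false positives.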
